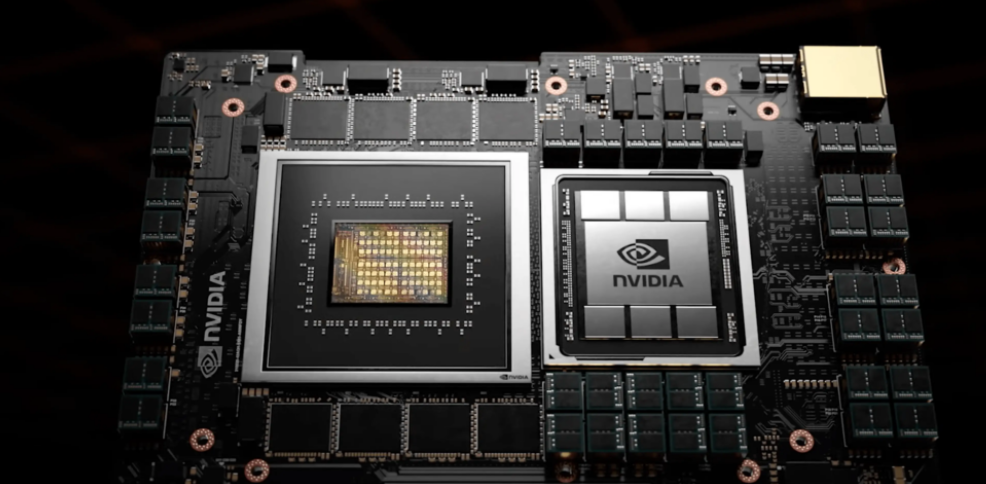

Solutions for Implementing NVIDIA GPU Functionality Using FPGAs

Implementing the core functionalities of NVIDIA GPUs using FPGAs is technically feasible, but requires balancing performance, development difficulty, and application scenarios. Here's a key analysis:

1. Architectural Differences and Implementation Challenges

- Parallelism Model: NVIDIA GPUs employ a SIMT (single instruction, multiple threads) execution model on their CUDA cores, which suits large-scale data parallelism; FPGAs achieve flexible parallelism through hardware-level circuit reconfiguration, but routing resources occupy 70-90% of the silicon area, limiting computational density38.

- Development Complexity: GPU programming relies on high-level frameworks like CUDA, whereas FPGAs require hardware description languages (e.g., Verilog) or OpenCL, leading to longer development cycles and requiring specialized hardware knowledge28.

- Performance Bottlenecks: FPGA clock frequencies are limited by the programmable interconnect and are typically lower than GPU clocks; off-chip memory bandwidth (GB/s level) is also significantly lower than that of GPU HBM (TB/s level)48.
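One common way to reason about the bandwidth gap described above is the roofline model, in which attainable throughput is the smaller of the compute peak and the memory bandwidth multiplied by arithmetic intensity. The sketch below is only an illustration: the function name and both device dictionaries are hypothetical, and the numbers are placeholders rather than vendor specifications.

```python
def attainable_gflops(peak_gflops: float, bandwidth_gbs: float, flops_per_byte: float) -> float:
    """Roofline bound: throughput is capped by compute or by memory traffic."""
    return min(peak_gflops, bandwidth_gbs * flops_per_byte)


# Hypothetical devices (placeholder numbers, not taken from any datasheet).
fpga = {"peak_gflops": 1000.0, "bandwidth_gbs": 40.0}
gpu = {"peak_gflops": 10000.0, "bandwidth_gbs": 1000.0}

for intensity in (0.5, 4.0, 64.0):  # FLOPs performed per byte moved
    f = attainable_gflops(fpga["peak_gflops"], fpga["bandwidth_gbs"], intensity)
    g = attainable_gflops(gpu["peak_gflops"], gpu["bandwidth_gbs"], intensity)
    print(f"intensity={intensity:>5}: fpga={f:8.1f} GFLOP/s  gpu={g:8.1f} GFLOP/s")
```

With these placeholder values, low-intensity kernels are bandwidth-bound on both devices, so the GB/s versus TB/s difference dominates; only at high arithmetic intensity does the compute peak become the limit.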

2. Potential Advantage Scenarios

- Customized Computing: FPGAs can optimize data paths for specific algorithms (e.g., low-precision neural networks), achieving higher energy efficiency16.

- Low-Latency Requirements: FPGA hardware pipelines can achieve nanosecond-level responses, suitable for high-frequency trading or real-time control48.

- Dynamic Reconfiguration Capability: FPGAs can reconfigure their logic to keep up with algorithm iterations, whereas a GPU's hardware architecture is fixed69.
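The nanosecond-level figure for hardware pipelines follows from simple arithmetic: end-to-end latency is the number of pipeline stages times the clock period. The sketch below assumes an idealized, fully pipelined design with no stalls; the function names, stage count, and clock rate are illustrative assumptions, not measurements from the cited sources.

```python
def pipeline_latency_ns(stages: int, clock_mhz: float) -> float:
    """Latency of one item through an ideal pipeline, in nanoseconds."""
    period_ns = 1000.0 / clock_mhz  # one clock cycle in ns
    return stages * period_ns


def pipeline_throughput_per_s(clock_mhz: float, initiation_interval: int = 1) -> float:
    """Items completed per second once the pipeline is full."""
    return clock_mhz * 1e6 / initiation_interval


# Hypothetical design: 10 stages clocked at 250 MHz.
print(pipeline_latency_ns(10, 250.0))    # latency of a single item, in ns
print(pipeline_throughput_per_s(250.0))  # results per second at steady state
```

In this idealized model the latency depends only on the pipeline depth and the clock, not on batch size, which is why a modest clock frequency can still give a short, predictable response time.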

3. Implementation Solutions Reference

- OpenCL Programming: Generate FPGA hardware descriptions from C/C++ kernels annotated with pragma directives (a high-level synthesis flow), lowering the development barrier2.

- Open-Source Projects: Existing FPGA-based graphics accelerator implementations (e.g., Gitee open-source code) can be referenced for their rendering pipeline design11.

- Hybrid Architecture: Combine FPGA's custom computing units with GPU's general-purpose parallel capabilities, for example, using FPGAs to accelerate preprocessing and GPUs to handle main computations79.

4. Limitations

- Ecosystem Gap: GPUs have mature AI toolchains (e.g., TensorRT), while the FPGA ecosystem is fragmented with weaker software support8.

- Cost Issues: FPGA chip unit prices are high, making them suitable for small-batch, high-value scenarios, but less cost-effective than GPUs for large-scale deployment68.

In summary, FPGAs are suitable for replacing specific GPU functionalities (e.g., dedicated inference acceleration), but fully replicating a GPU's general-purpose computing capabilities requires overcoming architectural and ecosystem barriers34.

Below are representative cases and technical solutions for implementing NVIDIA GPU core functionalities with FPGAs, covering graphics rendering, AI inference, and hybrid architecture optimization:

🖥️ I. Graphics Rendering Acceleration

- Open-Source Graphics Accelerator Implementation

  - An FPGA-based GPU rendering pipeline that supports basic rasterization and shading computations and uses hardware pipelines to streamline real-time graphics processing. The open-source project has released complete code and documentation on Gitee (including ZYNQ platform adaptation)37.

  - Key Technologies: Parallel pixel processing units, custom video memory controllers, geometric transformation hardware acceleration modules.
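To make the rasterization stage concrete, here is a minimal software sketch of edge-function triangle coverage, a widely used rasterization technique. It is not taken from the Gitee project; the function names are hypothetical, and it assumes the triangle's vertices are ordered so that the edge function is non-negative for interior points.

```python
def edge(ax, ay, bx, by, px, py):
    """Signed-area term: non-negative when P lies on the inner side of edge A->B."""
    return (bx - ax) * (py - ay) - (by - ay) * (px - ax)


def rasterize(tri, width, height):
    """Return the (x, y) pixels whose centers are covered by the triangle."""
    (x0, y0), (x1, y1), (x2, y2) = tri
    covered = []
    for y in range(height):
        for x in range(width):
            px, py = x + 0.5, y + 0.5  # sample at the pixel center
            w0 = edge(x1, y1, x2, y2, px, py)
            w1 = edge(x2, y2, x0, y0, px, py)
            w2 = edge(x0, y0, x1, y1, px, py)
            if w0 >= 0 and w1 >= 0 and w2 >= 0:
                covered.append((x, y))
    return covered


pixels = rasterize(((0, 0), (4, 0), (0, 4)), 4, 4)
print(len(pixels), pixels)
```

Each pixel test depends only on the three vertices and its own coordinates, so the two loops carry no dependencies between iterations. That independence is what allows a hardware design to evaluate many pixels at once in parallel pixel processing units.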

🧠 II. AI Inference Acceleration Alternatives

- Sparse Neural Network Inference Optimization

  - Intel Stratix 10 FPGAs, for pruned and ternary neural networks, achieved significant performance improvements compared to NVIDIA Titan X GPUs:

    - Pruned ResNet: 10% performance increase

    - Int6 precision models: 50% performance increase

    - Binarized networks: 5.4x performance increase6.

  - Core Mechanisms: Zero-Skipping hardware logic and dynamic data compression pathways.

- Customized CNN Accelerator

  - GitHub open-source projects (e.g., cnn_hardware_acclerator_for_fpga) provide parameterized Verilog implementations, supporting hardware parallelization design for convolutional layers, deployed to FPGA platforms via Xilinx Vivado. Measured latency is lower than GPU batch processing mode8.

  - Advantage Scenarios: Low-power edge device image classification, SAR target recognition.
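The zero-skipping mechanism mentioned for sparse networks can be illustrated in software: a multiply-accumulate (MAC) is only issued when both operands are non-zero. The sketch below is a behavioral model under that assumption, with hypothetical function names and made-up data; it does not reproduce the Stratix 10 design.

```python
def dense_dot(weights, activations):
    """Baseline: every weight/activation pair costs one MAC."""
    acc, macs = 0, 0
    for w, a in zip(weights, activations):
        acc += w * a
        macs += 1
    return acc, macs


def zero_skipping_dot(weights, activations):
    """Skip pairs where either operand is zero; the result is unchanged."""
    acc, macs = 0, 0
    for w, a in zip(weights, activations):
        if w == 0 or a == 0:
            continue  # a zero product contributes nothing, so no MAC is issued
        acc += w * a
        macs += 1
    return acc, macs


# Made-up pruned weights (mostly zero) and activations.
weights = [0, 2, 0, -1, 0, 0, 3, 0]
activations = [5, 1, 7, 0, 2, 4, 2, 9]
print(dense_dot(weights, activations))
print(zero_skipping_dot(weights, activations))
```

Both functions return the same sum, but the zero-skipping version counts far fewer MACs on pruned data. In hardware, that saving only materializes if the skipped slots can be filled with useful work, which is where custom data paths help.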

⚡ III. Hybrid Architecture Collaborative Computing

- FPGA+GPU Heterogeneous Inference System

  - Task Offloading Design: FPGAs handle preprocessing (e.g., image decoding, data cleaning), while GPUs execute complex model inference (e.g., MacBERT). Case studies show latency reduced to below 50ms in voice assistant scenarios10.

  - Resource Binding Mechanism: Dynamically allocate FPGA resources to high-frequency small models (TinyBERT), with GPUs focusing on large model computations, improving system throughput by 40%10.

- Cloud-Based Heterogeneous Computing Platform

  - Microsoft Azure deploys FPGA clusters for AI services: FPGAs accelerate real-time feature extraction, while GPUs handle matrix operations, reducing overall power consumption by 35%13.
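The task offloading and resource binding ideas above can be sketched as a small dispatcher: every request is preprocessed on the FPGA path, then routed to the FPGA or the GPU depending on which model it targets. Everything here is a hypothetical stand-in (the `Request` type, the binding table, and the preprocessing function); a real system would call device runtimes instead of plain Python functions.

```python
from dataclasses import dataclass


@dataclass
class Request:
    model: str
    payload: bytes


# Hypothetical binding table: small, frequently called models stay on the FPGA.
FPGA_MODELS = {"TinyBERT"}


def preprocess_on_fpga(payload: bytes) -> bytes:
    """Stand-in for FPGA preprocessing such as decoding or data cleaning."""
    return payload.strip()


def route(request: Request) -> str:
    """Pick the device that runs inference for this request."""
    return "fpga" if request.model in FPGA_MODELS else "gpu"


def handle(request: Request):
    """Preprocess first, then report the chosen inference target and the cleaned data."""
    data = preprocess_on_fpga(request.payload)
    return route(request), data


print(handle(Request("TinyBERT", b"  hello ")))
print(handle(Request("MacBERT", b"world")))
```

Keeping the binding in a table rather than in code means it could be updated at runtime as request frequencies change, which is one way to read "dynamically allocate" in the description above.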

🔍 IV. Key Performance Comparison

| Scenario | FPGA Solution Advantages | Compared to GPU Performance |
| --- | --- | --- |
| Sparse Neural Network Inference | 3x energy efficiency improvement, supports dynamic sparse compression | Outperforms Titan X Pascal6 |
| Low-Latency Image Processing | Nanosecond-level pipeline response | Superior to GPU batch processing mode8 |
| Hybrid Inference System | Task-level energy efficiency optimization, 40% latency reduction | Outperforms pure GPU solutions10 |

⚠️ V. Implementation Limitations

- Development Ecosystem Shortcomings: Lacks a general programming framework similar to CUDA, requiring reliance on OpenCL or manual RTL optimization28.

- Commercial Applicability: FPGA unit prices are 4-8 times those of comparable GPUs, making them suitable only for specialized scenarios (e.g., military, medical imaging)113.

Case studies show: FPGAs can surpass GPU efficiency in specific compute-intensive tasks (such as custom graphics pipelines, sparse AI inference), but require deep software and hardware co-optimization and do not yet cover the general graphics computing ecosystem36.