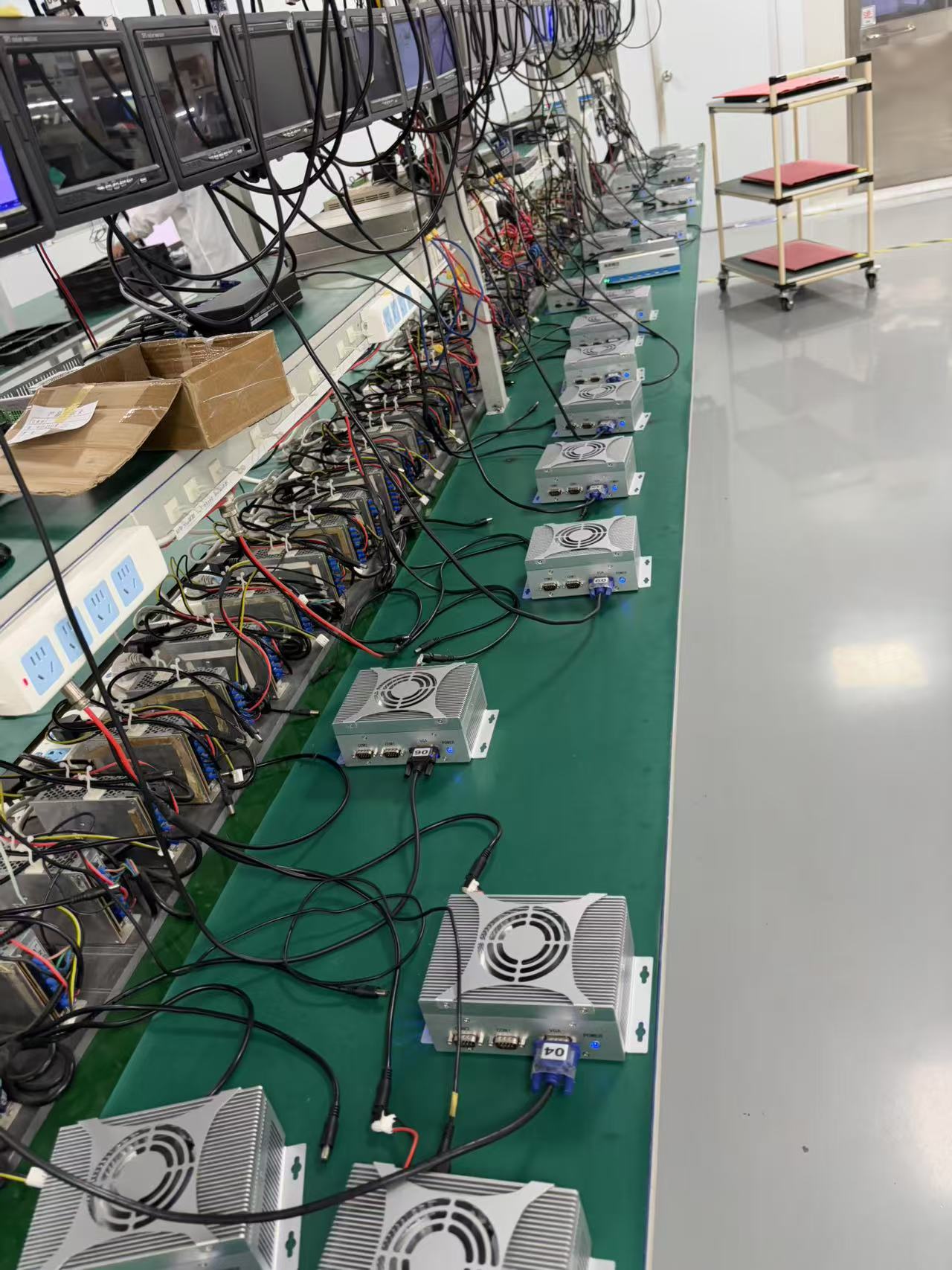

RK3588+MCU Robot Controller Solution

This article analyzes a robot controller solution built on the RK3588 and an MCU, which combines high-performance computing with real-time control.

I. Core Hardware Architecture

- RK3588 Main Control Unit

  - Uses a big.LITTLE architecture with 4x Cortex-A76 (2.4GHz) + 4x Cortex-A55 (1.8GHz) cores and a 6 TOPS NPU, supporting real-time inference for AI models such as YOLOv5s (49fps@1080p) [1].

  - Integrates a Mali-G610 GPU and supports 4K@120fps display output, which covers complex HMI requirements [2].
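One way to exploit the big.LITTLE split is to pin latency-sensitive threads to the Cortex-A76 cluster. The following is a minimal sketch using the Linux `sched_setaffinity` call. The CPU numbering (cpu0-3 = A55, cpu4-7 = A76) and the helper names `build_cpu_mask` and `pin_to_big_cores` are assumptions for illustration, so the core layout should be checked on the target board first.

```c
#define _GNU_SOURCE
#include <assert.h>
#include <sched.h>
#include <stddef.h>

/* Assumption: Linux enumerates the RK3588 cores as cpu0-3 = Cortex-A55 and
 * cpu4-7 = Cortex-A76. Confirm this on the target before relying on it. */
enum { BIG_FIRST = 4, BIG_LAST = 7 };

/* Fill `set` with the CPUs first..last (inclusive). */
void build_cpu_mask(cpu_set_t *set, int first, int last)
{
    assert(set != NULL && first >= 0 && first <= last && last < CPU_SETSIZE);
    CPU_ZERO(set);
    for (int cpu = first; cpu <= last; ++cpu)
        CPU_SET(cpu, set);
}

/* Pin the calling thread to the big cores.
 * Returns 0 on success, -1 on error (errno is set by the kernel). */
int pin_to_big_cores(void)
{
    cpu_set_t set;
    build_cpu_mask(&set, BIG_FIRST, BIG_LAST);
    return sched_setaffinity(0, sizeof(set), &set);
}
```

A vision or SLAM thread would call `pin_to_big_cores()` once at startup, leaving the A55 cluster for housekeeping tasks.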

- MCU Collaborative Control

  - Connects to industrial-grade MCUs such as the STM32H7 over SPI/I2C. The MCU handles microsecond-level real-time control (e.g., servo motor PWM output) and, together with the RK3588, forms an AMP heterogeneous computing architecture [3].

  - Typical application: the MCU processes encoder signals (1MHz sampling rate) while the RK3588 runs SLAM algorithms (mapping frequency 30Hz) [1].
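The RK3588-to-MCU link described above needs some framing so that a corrupted SPI transfer is not applied as a motor command. The sketch below shows one possible fixed-size frame protected by CRC-16/CCITT-FALSE. The 8-byte layout, the sync byte, and the names `frame_encode` and `frame_decode` are hypothetical and are not taken from any vendor protocol.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical frame layout:
 * [0] sync 0xA5 | [1] command id | [2..5] int32 payload (LE) | [6..7] CRC-16 (LE) */
enum { FRAME_LEN = 8, FRAME_SYNC = 0xA5 };

/* CRC-16/CCITT-FALSE: polynomial 0x1021, initial value 0xFFFF, no reflection. */
uint16_t crc16_ccitt(const uint8_t *data, size_t len)
{
    uint16_t crc = 0xFFFF;
    for (size_t i = 0; i < len; ++i) {
        crc ^= (uint16_t)((uint16_t)data[i] << 8);
        for (int bit = 0; bit < 8; ++bit)
            crc = (crc & 0x8000) ? (uint16_t)((crc << 1) ^ 0x1021)
                                 : (uint16_t)(crc << 1);
    }
    return crc;
}

/* Build a frame carrying one signed 32-bit value (e.g., a position setpoint). */
void frame_encode(uint8_t out[FRAME_LEN], uint8_t cmd, int32_t value)
{
    assert(out != NULL);
    uint32_t u;
    memcpy(&u, &value, sizeof u);          /* reinterpret without UB */
    out[0] = FRAME_SYNC;
    out[1] = cmd;
    for (int i = 0; i < 4; ++i)
        out[2 + i] = (uint8_t)(u >> (8 * i));
    uint16_t crc = crc16_ccitt(out, 6);
    out[6] = (uint8_t)(crc & 0xFF);
    out[7] = (uint8_t)(crc >> 8);
}

/* Returns 0 and fills cmd/value on success, -1 on a bad sync byte or CRC. */
int frame_decode(const uint8_t in[FRAME_LEN], uint8_t *cmd, int32_t *value)
{
    assert(in != NULL && cmd != NULL && value != NULL);
    if (in[0] != FRAME_SYNC)
        return -1;
    uint16_t crc = (uint16_t)(in[6] | (in[7] << 8));
    if (crc != crc16_ccitt(in, 6))
        return -1;
    uint32_t u = 0;
    for (int i = 0; i < 4; ++i)
        u |= (uint32_t)in[2 + i] << (8 * i);
    *cmd = in[1];
    memcpy(value, &u, sizeof u);
    return 0;
}
```

On the MCU side, the same `frame_decode` would run in the SPI receive handler, and a frame that fails the check would simply be dropped so the previous setpoint stays in effect.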

- Expansion Interface Configuration

  - Supports a PCIe 3.0 x4 (8 GT/s per lane) connection to an FPGA for accelerating LiDAR point cloud processing (latency <5ms) [4].

  - Native dual Gigabit Ethernet ports enable EtherCAT master functionality, supporting 32-axis synchronous control (jitter <1μs) [3].
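To judge whether the PCIe link fits inside the 5ms point cloud budget, it helps to work out the usable bandwidth. PCIe 3.0 signals at 8 GT/s per lane with 128b/130b encoding, so the payload rate is slightly below the raw rate. The sketch below does that arithmetic; the function names and the 1 MB frame size in the usage note are assumptions, and packet-level (TLP/DLLP) overhead is ignored.

```c
#include <assert.h>

/* Link bandwidth in Gbit/s after line encoding, before packet overhead.
 * For PCIe 3.0: gt_per_s = 8.0, enc_payload_bits = 128, enc_total_bits = 130. */
double pcie_payload_gbps(double gt_per_s, int lanes,
                         int enc_payload_bits, int enc_total_bits)
{
    assert(gt_per_s > 0.0 && lanes > 0);
    assert(enc_payload_bits > 0 && enc_total_bits >= enc_payload_bits);
    return gt_per_s * lanes * enc_payload_bits / enc_total_bits;
}

/* Time in microseconds to move `bytes` over a link of `gbps` Gbit/s. */
double transfer_time_us(double bytes, double gbps)
{
    assert(bytes >= 0.0 && gbps > 0.0);
    return bytes * 8.0 / (gbps * 1e3);
}
```

For an x4 link, `pcie_payload_gbps(8.0, 4, 128, 130)` evaluates to about 31.5 Gbit/s, and a hypothetical 1 MB point cloud frame would take roughly 254μs to transfer, which leaves most of the 5ms budget for processing.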

II. Software System Design

- Real-time Operating System

  - Uses Linux 6.1 with the PREEMPT_RT patch, optionally running ROS 2 Galactic on top, with task scheduling jitter <10μs and support for multi-sensor data fusion (IMU/vision/LiDAR) [3].

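  - Example code (EtherCAT master configuration):

    ```c
    #include <ethercat.h>

    /* ecat_master_config_t and ecat_master_init() are kept from the original
     * snippet as illustrative names; map them to the API of the EtherCAT
     * master stack actually in use. */
    void ecat_init(void)
    {
        ecat_master_config_t cfg = { .slave_count = 8 };  /* 8 slaves on the bus */
        ecat_master_init(&cfg);
    }
    ```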
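The scheduling jitter figure quoted for the real-time kernel can be checked with a periodic loop that sleeps until absolute deadlines and records how late each wake-up is. The following is a minimal sketch using POSIX `clock_nanosleep`; the helper names are assumptions, and a real deployment would additionally run the thread under `SCHED_FIFO` and lock its memory.

```c
#define _POSIX_C_SOURCE 200809L
#include <assert.h>
#include <stdint.h>
#include <time.h>

#define NSEC_PER_SEC 1000000000L

/* Advance `t` by `ns` nanoseconds, keeping tv_nsec in [0, 1e9). */
void timespec_add_ns(struct timespec *t, long ns)
{
    assert(t != NULL && ns >= 0);
    t->tv_nsec += ns;
    while (t->tv_nsec >= NSEC_PER_SEC) {
        t->tv_nsec -= NSEC_PER_SEC;
        t->tv_sec += 1;
    }
}

/* Signed difference a - b in nanoseconds. */
int64_t timespec_diff_ns(const struct timespec *a, const struct timespec *b)
{
    return (int64_t)(a->tv_sec - b->tv_sec) * NSEC_PER_SEC
         + (a->tv_nsec - b->tv_nsec);
}

/* Run `cycles` periods of `period_ns`, calling work() once per period.
 * Returns the worst wake-up lateness in ns, or -1 if a clock call fails. */
int64_t run_periodic(long period_ns, int cycles, void (*work)(void))
{
    assert(period_ns > 0 && cycles >= 0);
    struct timespec next, now;
    int64_t worst = 0;

    if (clock_gettime(CLOCK_MONOTONIC, &next) != 0)
        return -1;
    for (int i = 0; i < cycles; ++i) {
        timespec_add_ns(&next, period_ns);
        /* Absolute deadlines keep the period from drifting with work() time. */
        if (clock_nanosleep(CLOCK_MONOTONIC, TIMER_ABSTIME, &next, NULL) != 0)
            return -1;
        if (clock_gettime(CLOCK_MONOTONIC, &now) != 0)
            return -1;
        int64_t late = timespec_diff_ns(&now, &next);
        if (late > worst)
            worst = late;
        if (work != NULL)
            work();
    }
    return worst;
}
```

Calling `run_periodic(1000000L, 10000, NULL)` measures the worst lateness over ten seconds of 1ms cycles; comparing the result with and without the real-time patch shows what the kernel configuration actually buys.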

- AI Algorithm Deployment

  - The NPU runs quantized models deployed through Rockchip's RKNN toolchain, achieving YOLOv5s detection accuracy of 75.2% mAP on COCO with a 3x increase in inference speed [1].

  - 3D vision perception: integrates dToF LiDAR (ranging accuracy ±1cm) and supports dynamic obstacle avoidance (response time <50ms) [3].
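The speed-up from quantized models comes from replacing floating-point values with 8-bit integers. The sketch below shows generic affine int8 quantization, where a real value maps to `round(x / scale) + zero_point`. It illustrates the arithmetic only and is not the NPU toolchain's API; the function names are assumptions.

```c
#include <assert.h>
#include <stdint.h>

/* q = clamp(round(x / scale) + zero_point, -128, 127) */
int8_t quantize_i8(float x, float scale, int zero_point)
{
    assert(scale > 0.0f);
    float scaled = x / scale;
    /* Round half away from zero without depending on libm. */
    long r = (long)(scaled >= 0.0f ? scaled + 0.5f : scaled - 0.5f);
    long q = r + zero_point;
    if (q < -128) q = -128;   /* saturate instead of wrapping */
    if (q > 127)  q = 127;
    return (int8_t)q;
}

/* Inverse mapping: x ~= scale * (q - zero_point) */
float dequantize_i8(int8_t q, float scale, int zero_point)
{
    return scale * (float)((int)q - zero_point);
}
```

The round trip loses at most half a quantization step for values inside the representable range, which is why the choice of `scale` per tensor largely determines how much accuracy the quantized model gives up.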

III. Typical Application Scenarios

- Industrial Robots

  - 6-axis collaborative robots: the RK3588 handles visual guidance (positioning accuracy 0.1mm) and the MCU performs joint control (1ms cycle) [4].

  - Case study: on an automotive welding line, multi-robot collaboration improves efficiency by 40% [1].
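The 1ms joint control cycle on the MCU is typically a small fixed-step control law executed from a timer interrupt. The following is a minimal PID sketch with output clamping and simple anti-windup. The source does not state which control law is used, so the PID choice, the gains, and the `joint_pid_t` type are assumptions for illustration.

```c
#include <assert.h>
#include <stddef.h>

typedef struct {
    float kp, ki, kd;
    float dt;          /* control period in seconds (0.001 for a 1 ms cycle) */
    float out_limit;   /* symmetric clamp on the controller output */
    float integral;    /* accumulated error * dt */
    float prev_error;  /* error from the previous step */
} joint_pid_t;

void joint_pid_init(joint_pid_t *p, float kp, float ki, float kd,
                    float dt, float out_limit)
{
    assert(p != NULL && dt > 0.0f && out_limit > 0.0f);
    p->kp = kp;
    p->ki = ki;
    p->kd = kd;
    p->dt = dt;
    p->out_limit = out_limit;
    p->integral = 0.0f;
    p->prev_error = 0.0f;
}

/* One control step: returns the clamped actuator command. */
float joint_pid_step(joint_pid_t *p, float target, float measured)
{
    assert(p != NULL);
    float error = target - measured;
    float integral = p->integral + error * p->dt;
    float derivative = (error - p->prev_error) / p->dt;
    float out = p->kp * error + p->ki * integral + p->kd * derivative;

    p->prev_error = error;
    if (out > p->out_limit) {
        out = p->out_limit;        /* saturated: do not commit the integral */
    } else if (out < -p->out_limit) {
        out = -p->out_limit;
    } else {
        p->integral = integral;    /* anti-windup: integrate only when unsaturated */
    }
    return out;
}
```

In this split, the RK3588 would send a new `target` at the vision rate while the MCU calls `joint_pid_step` every millisecond with the latest encoder reading.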

- AGV/AMR

  - Supports multi-robot scheduling (clusters of 100+ units), reducing the path conflict rate by 60% [1].

  - Dynamic obstacle avoidance: LiDAR + vision fusion (minimum detection distance 0.5m) [3].
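Whether an AGV can actually avoid an obstacle depends on how far it travels between detection and standstill. The sketch below adds the reaction travel `v * t` to the braking travel `v^2 / (2a)`. It treats the 0.5m figure as the distance at which an obstacle is first seen in the worst case and reuses the <50ms response time quoted earlier; that reading, the speed, and the deceleration values are assumptions.

```c
#include <assert.h>

/* Distance covered between detection and standstill:
 * reaction travel (v * t_react) plus braking travel (v^2 / (2 * decel)). */
double stopping_distance_m(double speed_mps, double react_s, double decel_mps2)
{
    assert(speed_mps >= 0.0 && react_s >= 0.0 && decel_mps2 > 0.0);
    return speed_mps * react_s
         + speed_mps * speed_mps / (2.0 * decel_mps2);
}

/* Returns 1 if the robot can stop inside the detection range, else 0. */
int can_stop_within(double detect_range_m, double speed_mps,
                    double react_s, double decel_mps2)
{
    return stopping_distance_m(speed_mps, react_s, decel_mps2) <= detect_range_m;
}
```

With a hypothetical deceleration of 1.5 m/s², a robot moving at 1.0 m/s needs about 0.38m to stop and fits inside 0.5m, while at 1.5 m/s it needs about 0.83m and does not, so the speed limit would have to follow from the sensor range.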

- Service Robots

  - Offline voice interaction: 4-microphone array + NPU-accelerated wake-word recognition (false wake-up rate <0.1%) [3].

IV. Performance Comparison

| Metric | Traditional x86 Solution | RK3588+MCU Solution |
| --- | --- | --- |
| Real-time Control Cycle | 500μs level | <10μs level [3] |
| Multi-protocol Compatibility | Requires protocol conversion card | Native support for EtherCAT/CANopen [4] |
| Axis Control Expansion Capability | Max 4 axes | Expandable to 32 axes [3] |
| Domestic Component Rate | Relies on imported chips | 100% domestic chips [5] |

This solution balances compute performance against real-time capability through heterogeneous computing, which makes it suitable for highly dynamic industrial scenarios.