AI Edge Computing Box + AI Algorithm Integrated Hardware-Software Solution for Smart Transportation

Urban traffic networks are the circulatory system of a modern city—moving people, goods, and emergency services while simultaneously generating enormous volumes of unstructured video data that no human operator team can monitor at scale. Sienovo's AI Edge Computing Box, paired with an integrated hardware-software stack purpose-built for smart transportation, addresses this challenge by bringing real-time inference directly to the roadside edge, eliminating the latency and bandwidth cost of sending raw video to a central data center before acting on it.

The Problem: Traditional Traffic Enforcement at Scale

Conventional traffic management relies on fixed cameras whose footage is shipped to back-end servers for analysis—often minutes or hours after an event. By the time a violation is flagged, the vehicle is long gone and the congestion has worsened. Enforcing regulations against over-length loads, overweight trucks, and speeding requires either dedicated weigh stations (capital-intensive, easily avoided) or a sufficiently intelligent edge device that can make classification decisions in milliseconds and trigger downstream workflows immediately.

Beyond enforcement, urban roads must also handle softer but equally important safety signals: a driver distracted by a phone call, a vehicle drifting across lanes without signaling, a pedestrian stepping into fast-moving traffic, or an incident blocking an emergency shoulder lane. These events are time-critical, so detection and alerting have to happen within seconds rather than minutes.

Sienovo's Integrated Approach

Smart transportation is an indispensable component of new infrastructure. Sienovo applies AI to two traffic-management tasks in particular: enforcement of over-limit regulations (over-length, overweight, over-speed) and violation control (illegal parking, road space encroachment). By integrating mature video structuring models for vehicle analysis, the solution delivers real-time, dynamic traffic management and establishes a unified "single view" of smart city transportation, with the aim of enhancing productivity, maintaining stability, improving efficiency, and increasing public well-being.

The architecture centers on an AI edge computing box deployed at the roadside or on a gantry, running the full inference pipeline locally. The box ingests live camera streams, runs the detection models, structures the output into metadata events (vehicle class, plate, behavior, timestamp, GPS coordinates), and forwards only those structured records, not raw video, to the cloud management platform. Because a structured record is far smaller than the video it describes, this design sharply reduces uplink bandwidth requirements and keeps sensitive imagery on-premises.
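To make the "structured record" idea concrete, here is a minimal sketch of what one such event might look like when serialized for the uplink. The field names, the `TrafficEvent` class, and the sample values are assumptions for illustration; the source lists only the kinds of fields involved, not an actual schema or wire format.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class TrafficEvent:
    """One structured record produced at the edge (hypothetical schema)."""
    vehicle_class: str
    plate: str
    behavior: str
    timestamp: str   # ISO 8601 string in UTC
    latitude: float
    longitude: float


def encode_event(event: TrafficEvent) -> bytes:
    """Serialize an event to compact UTF-8 JSON for transmission."""
    return json.dumps(asdict(event), separators=(",", ":")).encode("utf-8")


# Sample values are placeholders, not real detections.
event = TrafficEvent(
    vehicle_class="truck",
    plate="ABC1234",
    behavior="emergency_lane_violation",
    timestamp=datetime(2024, 1, 1, 8, 30, tzinfo=timezone.utc).isoformat(),
    latitude=31.2304,
    longitude=121.4737,
)
payload = encode_event(event)
print(len(payload), "bytes")
```

A record like this is a few hundred bytes at most, which is the reason forwarding events instead of frames keeps the uplink light.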

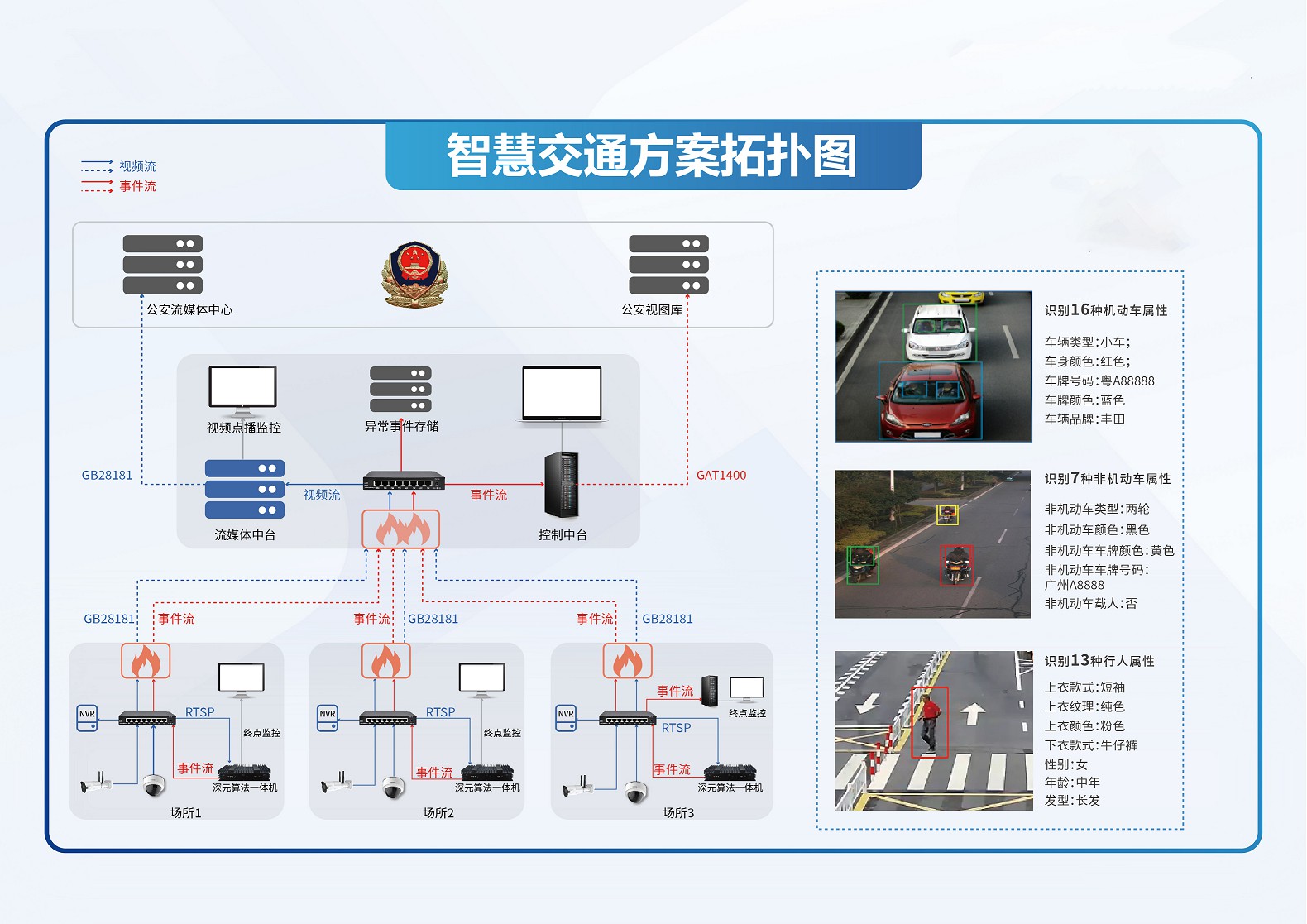

Solution Topology

The diagram below illustrates the full deployment topology, from edge camera clusters through the AI box and out to the unified management dashboard.

Detection Capabilities

The on-device AI stack covers eight core traffic-intelligence scenarios:

Phone use while driving — Detects a driver holding or looking at a handheld device by analyzing posture and hand position relative to the steering wheel, triggering an alert and capturing an evidentiary image suitable for enforcement.

Lane change detection — Tracks vehicle trajectory across lane markings to identify abrupt or unsignaled lane changes, useful both for safety monitoring and for feeding traffic-flow models.

Pedestrian detection — Identifies pedestrians entering roadways, crosswalks, or restricted zones, enabling both real-time alerts and post-event analytics on pedestrian-vehicle conflict points.

Emergency lane violation — Monitors shoulder and emergency lanes for vehicles that occupy them outside permitted conditions, a common contributor to secondary accidents during incidents.

Congestion detection — Aggregates vehicle density and speed across a monitored segment to classify congestion level in real time, feeding adaptive signal control systems or variable message signs.

Accident detection — Identifies stopped or abnormally positioned vehicles, sudden deceleration events, and stationary clusters indicative of a collision, triggering immediate notification to traffic management centers and emergency dispatch.

Illegal vehicle parking detection — Detects vehicles parked in no-stopping zones, bus stops, fire lanes, and crosswalks, with time-stamped evidence capture for enforcement workflows.

License plate recognition (LPR) — Reads plates across a wide range of conditions (angle, occlusion, motion blur, night-time IR illumination), providing the vehicle identity anchor that links behavioral detections to registered owner records and watchlists.
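As one illustration of how a scenario above could turn detections into a decision, the congestion case can be sketched as a rule over vehicle density and average speed on a segment. This is a simplified sketch, not Sienovo's model: the `SegmentSample` type, the free-flow speed, and every threshold are hypothetical values chosen only to show the shape of the logic.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class SegmentSample:
    """Detections aggregated over one short time window (hypothetical type)."""
    vehicle_count: int
    speeds_kmh: List[float]


def classify_congestion(sample: SegmentSample,
                        segment_km: float,
                        free_flow_kmh: float = 60.0) -> str:
    """Classify a segment as 'free_flow', 'slow', or 'congested'.

    All thresholds below are illustrative assumptions, not calibrated values.
    """
    density = sample.vehicle_count / segment_km  # vehicles per km
    # With no speed readings, fall back to free-flow speed so density decides.
    avg_speed = mean(sample.speeds_kmh) if sample.speeds_kmh else free_flow_kmh
    speed_ratio = avg_speed / free_flow_kmh

    if speed_ratio < 0.3 or density > 80:
        return "congested"
    if speed_ratio < 0.7 or density > 40:
        return "slow"
    return "free_flow"


light = SegmentSample(vehicle_count=5, speeds_kmh=[58.0, 62.0, 55.0])
heavy = SegmentSample(vehicle_count=30, speeds_kmh=[12.0, 15.0, 10.0])
print(classify_congestion(light, segment_km=0.5))
print(classify_congestion(heavy, segment_km=0.5))
```

A production system would more likely smooth these readings over several windows before changing a signal plan or a message sign, so that a single noisy sample does not flip the state.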

Smart Transportation Box Management Platform

All edge nodes report into a centralized management platform that aggregates events, visualizes them on a geo-referenced map layer, and provides operators with a unified dashboard—the "single view" of city-wide traffic conditions.

The platform ties together the structured event streams from all deployed boxes, enabling operators to drill from city-level heat maps down to individual incident clips, manage enforcement workflows, and generate compliance and safety reports. Because the edge boxes handle inference locally, the platform receives lightweight metadata rather than continuous high-definition video streams, keeping storage and egress costs predictable even as the deployment scales across hundreds of intersections.
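The city-level heat map described above can be approximated by binning event coordinates into a regular grid and counting events per cell. The sketch below assumes events arrive as dictionaries with `latitude` and `longitude` keys and uses a hypothetical cell size of 0.01 degrees; the source does not describe how the platform actually builds its map layer.

```python
import math
from collections import Counter
from typing import Dict, Iterable, Tuple

Cell = Tuple[int, int]


def grid_cell(lat: float, lon: float, cell_deg: float = 0.01) -> Cell:
    """Map a coordinate to the integer index of its grid cell."""
    return (math.floor(lat / cell_deg), math.floor(lon / cell_deg))


def heatmap_counts(events: Iterable[Dict[str, float]],
                   cell_deg: float = 0.01) -> Counter:
    """Count events per grid cell; higher counts mean hotter cells."""
    return Counter(
        grid_cell(e["latitude"], e["longitude"], cell_deg) for e in events
    )


# Placeholder coordinates: two events close together, one farther away.
events = [
    {"latitude": 31.2304, "longitude": 121.4737},
    {"latitude": 31.2311, "longitude": 121.4741},
    {"latitude": 31.2456, "longitude": 121.4905},
]
counts = heatmap_counts(events)
for cell, n in counts.most_common():
    print(cell, n)
```

Keeping the event identifiers alongside each cell, instead of only the count, would give the drill-down path from a hot cell to the individual incidents behind it.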

Edge AI as the Enabling Layer

The viability of this solution hinges on the AI edge computing box having sufficient on-device compute to run multiple concurrent deep-learning models against multiple simultaneous camera streams—without cloud round-trips introducing unacceptable latency. Sienovo's hardware is designed around this requirement, pairing an inference-optimized SoC with the ruggedized enclosure, thermal management, and connectivity interfaces demanded by outdoor traffic infrastructure deployments.
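A rough way to reason about "sufficient on-device compute" is a back-of-the-envelope utilization estimate. The sketch below assumes the simplest possible scheduling: every model runs once per frame, one after another, on a single accelerator with no batching. The latencies, frame rate, and headroom factor are hypothetical numbers, not measurements of any Sienovo hardware.

```python
from typing import Sequence


def accelerator_utilization(streams: int,
                            fps: float,
                            model_latencies_ms: Sequence[float]) -> float:
    """Fraction of each second the accelerator is busy (above 1.0 is overload)."""
    per_frame_ms = sum(model_latencies_ms)
    return streams * fps * per_frame_ms / 1000.0


def max_streams(fps: float,
                model_latencies_ms: Sequence[float],
                headroom: float = 0.8) -> int:
    """Largest stream count that keeps utilization at or below `headroom`."""
    per_frame_ms = sum(model_latencies_ms)
    return int(headroom * 1000.0 / (fps * per_frame_ms))


# Hypothetical per-frame latencies for three models, in milliseconds.
latencies = [4.0, 3.0, 5.0]
print(accelerator_utilization(streams=8, fps=10, model_latencies_ms=latencies))
print(max_streams(fps=10, model_latencies_ms=latencies))
```

With these made-up figures, eight streams at 10 frames per second would keep the accelerator busy about 96% of the time, which is why sampling frame rate and the number of models per stream are usually the first knobs to tune.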

By combining targeted AI models for each detection scenario with a mature video structuring pipeline, the system converts raw camera feeds into actionable intelligence at the point of capture. This is the foundation of smart city traffic governance: not more cameras, but cameras that understand what they see.